Scale Scrum to Multiple Teams

Deliver Larger Chunks of Work

Despite the growth of 3D printing, fully tested customer-releasable hardware designs cannot be delivered every sprint in most instances. Especially when a software team has to balance multiple projects, it often doesn’t make sense to provide a new version of software that fast, either. In some cases, the customers don’t want it that often! Also, many programs in larger companies require multiple teams to contribute to the same software code base and/or systems that include firmware and hardware. Some level of program-level testing is required in those situations to ensure top quality, requiring a stopping point in the development of each incremental version. In any of those scenarios, customers may want lead time to prepare for implementation. Two or three months of predictability may be critical to managing their expectations, and thus their satisfaction. Hence the Full Scale agile™ (FuSca™) version of “Release Planning.” Either a shippable product cannot be delivered every sprint, or for various reasons the business is waiting a number of sprints before releasing their deliverables all at once.

A “release” is a set of requirements that take more than one sprint to complete, and the term is also used for the time period in which that set is delivered. In theory a single team could use releases, but in most companies larger deliverables require the output of multiple teams in what I call a “program.”

In FuSca, you can have two types of releases:

- Planning Release—Work done over a set number of sprints for purposes of predicting delivery, which may or may not comprise a Version Release.

- Version Release—Work delivered to a customer, generally tracked with a new version or model number.

Given the Agile Principle about rapid delivery, the preference obviously is to combine these: in effect, each Planning Release is also a Version Release. Even this is far from the ideal scenario for software-only products. Their goal should be an integrated development/operations (“Dev/Ops”) environment with continuous integration and automated testing. In other words, the most efficient software companies can deliver bug-free Version Releases multiple times a day, and Planning Releases are unnecessary for them. But for programs involving hardware, regulatory compliance testing, or other similar constraints, the different release types enable agile design.

Note that even though Scrum is the basis for this approach, teams do not have to be using Scrum at the team level. They just have to be tracking their work in chunks small enough to deliver in a month or less, regardless of what they call those chunks (user stories, work packages, tickets, etc.). And they need a metric of how many of those chunks they deliver consistently within that time period. So even self-directed teams using a variety of custom approaches can coordinate through this release planning process. If you are not familiar with Scrum, though, the process will make more sense if you first read, “An Overview of Scrum.”

Translate the Concepts

At the team level, Scrum allows multiple individuals to identify, plan, track, and report on their shared deliverables in a highly coordinated and transparent fashion. Full Scale agile does the same for multiple teams. This is referred to as “Agile-at-scale.” Though multiple software teams could release a new version every sprint, in every case I have encountered, the output of multiple teams has required multiple sprints given the firm’s existing structure.

Contrary to what you will hear from people with a more complicated system of release planning to sell you, Agile in general and Scrum specifically are easily scaled up in an organization, and cost nothing but time and discipline to implement. (I don’t claim to be the first to do it; I have mentioned Nexus and Large Scale Scrum or LeSS, for example.) Here is how the terms translate in FuSca:

| Concept | One Team | Multiple Teams |
| --- | --- | --- |
| Time Period | Sprint | Release |
| Work Product | User stories | Epics |
| Velocity | Stories per sprint | Epics per release |
| Facilitator/Coach | Facilitator (“Scrum Master”) | Agile Release Manager |
| Planners | Team members | Guidance Roles |
| Planning Meeting | Planning Ceremony | Release Planning Meeting |
| Standup | Daily Standup | Joint Demonstration Ceremony |
| Demonstration | Demonstration Ceremony | Joint Demonstration Ceremony |
| Retrospective | Sprint Retrospective | Release Retrospective |
| Overcommitment Check | Capacity Planning | Velocity vs. Story Count |

Like sprints, releases in FuSca:

- Have a set cycle length, as short as possible and no more than four months.

- Have a finite amount of work planned for completion, which data shows is realistic.

Note, however, that you do not have to be using any of the FuSca approaches to agility to benefit from this one for multi-team planning. You just need a reliable output number for requirements per week or several weeks. Replace the words “epics” and “user stories” with “features” and “work packages,” for example, and FuSca Release Planning should work for you.
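As a rough illustration of the “reliable output number” idea, the sketch below compares the work planned for a release against what a team's history suggests it can finish. The function names, the use of a simple average of recent sprints, and the sample numbers are all assumptions made for this example; they are not taken from the FuSca steps, and your tracker may calculate velocity differently.

```python
from statistics import mean


def release_capacity(items_per_sprint_history, sprints_in_release):
    """Estimate how many work items a team can finish in one release.

    Assumption: velocity is the plain average of recent sprint results.
    """
    velocity = mean(items_per_sprint_history)
    return velocity * sprints_in_release


def is_overcommitted(planned_items, items_per_sprint_history, sprints_in_release):
    """Return True when the plan exceeds the history-based capacity."""
    capacity = release_capacity(items_per_sprint_history, sprints_in_release)
    return planned_items > capacity


# Hypothetical team: 8, 10, and 9 items finished in its last three sprints,
# planning a release that is four sprints long.
history = [8, 10, 9]
print(release_capacity(history, 4))      # 36
print(is_overcommitted(40, history, 4))  # True
print(is_overcommitted(36, history, 4))  # False
```

The same check works whether the items are user stories, work packages, or tickets, as long as the history and the plan count the same kind of item.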

Manage Expectations through Transparency

I stumbled upon a simple truth many years ago: People can handle bad news; what they can’t handle is bad surprises. After I had said that a few times at one company, it spread until it was quoted back to me by people who didn’t realize they were quoting me! That suggests the universality of the concept. Certainly people became much more proactive in communicating about problems at that company.

A key to Full Scale agile is managing expectations via transparency. In FuSca, we do not lie to a customer by telling them something can be accomplished by a given date long before we can state that with high confidence. Instead we practice the level of communication intended in the Agile Manifesto, implemented in FuSca through:

- Frequent contact with the Customer to identify or modify the requirements.

- Invitations to each Joint Demonstration Ceremony.

- Access to the same progress reports your executives can see.

- A contract structured to embrace change.

We only name a “Release Date” when highly confident we will hit it. Generally this means two or three sprints in advance, which still beats the reality of most waterfall projects. The customer knows, in real-time if desired, the reason for our confidence, and knows as soon as we do when problems arise.

Under the FuSca approach, the customer may not like the bad news that their desired date can’t be met, but it won’t be a surprise. When they are surprised, it is for the good reason that you deliver more requirements than planned. Or maybe, sadly, the real surprise will be that unlike most suppliers, you actually did what you said you would do!

Mind the Caveats

This release-planning section of the site leads you through the process of using Scrum to plan releases. Bear in mind two warnings.

As previously stated, I do not recommend that teams new to formal work management like Kanban or Scrum try this immediately. The release steps add to an already high learning curve, and release estimates will not be accurate until you have a consistent requirement output rate in all teams (“velocity” in Scrum terms, or “throughput” in Kanban). Combined, those two facts mean the costs outweigh the benefits until you understand Scrum, Kanban, or similar methods at the team level.

Also, managing multi-team releases is extremely difficult using paper trackers, so much so that I won’t do it. Inaccurate data is highly likely due to transcription errors. When teams are not collocated, significant effort is required to gather the needed data. There is no easy way to identify and coordinate dependencies between teams. For a single team, it’s manageable. In multi-team programs, invest in a digital tracker if you haven’t already. See “The Digital Option” and perform the relevant steps for that section.

⇒ Steps: Set Up Tracker for Releases

Allow for System Testing

The Reality for Many Programs

Every waterfall project I have been on had something like a “Testing” phase. Some testing occurred all along, but some types were considered impossible to do until all of the development work was finished. The reason is pretty obvious in projects involving hardware. Computer modeling and prototypes only get you so far; ultimately you have to test many units of the real thing.

In software, waterfall theoretically requires a “code freeze” after which no new functionality can be introduced into the version about to be released. Then full-time testing teams check to make sure the product works… as intended… without breaking older functionality or data that might use it. The development teams wait for defects to come in, fix them, and the test teams run the tests again, and in theory the cycle continues until no bugs are found. In practice, I have never seen the code actually get frozen, causing versions to be released late and/or without thorough testing. This results in a lot of finger-pointing about who is to blame for the late releases and quality problems.

In the software world, a lot of effort has been put into fixing the issue by automating most of the testing and running it continuously throughout the release period. This is the right way to go if you can get there. Yet everything I read on the Web and hear in Agile meetings suggests most organizations are nowhere near the continuous-delivery ideal mentioned earlier. And Agile experts who have only worked in software don’t seem to realize that ideal is a fantasy in many settings where Scrum can be used. When hardware is involved, testing can take months. Equipment that sits outside and/or has electricity running through it faces long testing periods to ensure it can withstand the weather and not kill anybody. A company that produces a lot of options in its physical products must test its firmware and software in every combination, and may have limited test equipment. This can require physical reconfiguration of the test bed. User acceptance testing (UAT) is a part of support and implementation projects, and by definition is done by people not tied to an organization’s Agile goals. Month-end closings and negotiated contracts must go through executive reviews before acceptance.

Any of these scenarios can leave development teams in limbo. Rather than have them wait, FuSca treats negative test results as defects to be addressed by the normal means. These will be minimal under FuSca due to the emphasis on understanding epics before development and building in quality during it. Some approaches to Agile-at-scale kept a waterfall-like “hardening” phase in their release cycles to address these periods, but FuSca instead overlaps the releases in those cases to keep developers driving forward.

Phases, Releases, or Nothing New

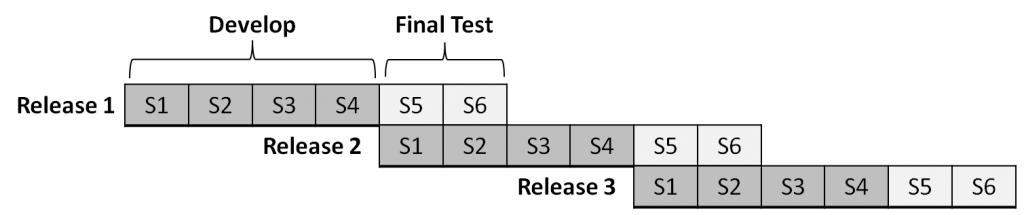

For system testing handled by the team that cannot be completed automatically and continuously, determine how long it takes after development ends. Then break your releases into waterfall-like phases with the second covering that number of sprints, but overlapping with the development phase of the next release. One program I worked with needed two sprints to complete its system testing. We broke each release into a development phase of four sprints and two sprints of system testing. The development phase of the next release started at the same time as the final testing phase of the previous release, like this:
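The overlap in that example can also be worked out in a few lines of code. This is only a sketch under the assumptions stated in the text (four development sprints, two system-test sprints, next release starting when the previous one enters testing); the function name `release_schedule` and the dictionary layout are hypothetical.

```python
def release_schedule(num_releases, dev_sprints=4, test_sprints=2):
    """Lay out overlapping releases as 1-indexed sprint ranges.

    Assumption: each release's development phase starts in the same
    sprint that the previous release's system-test phase starts.
    """
    schedule = []
    for i in range(num_releases):
        dev_start = 1 + i * dev_sprints
        dev_end = dev_start + dev_sprints - 1
        test_start = dev_end + 1
        test_end = test_start + test_sprints - 1
        schedule.append({
            "release": i + 1,
            "dev": (dev_start, dev_end),
            "test": (test_start, test_end),
        })
    return schedule


for r in release_schedule(3):
    print(f"Release {r['release']}: develop sprints {r['dev'][0]}-{r['dev'][1]}, "
          f"system test sprints {r['test'][0]}-{r['test'][1]}")
# Release 1: develop sprints 1-4, system test sprints 5-6
# Release 2: develop sprints 5-8, system test sprints 9-10
# Release 3: develop sprints 9-12, system test sprints 13-14
```

Changing `test_sprints` to match your own measured testing time shows how many sprints of overlap each team has to plan capacity for.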

Separate testing teams should be Scrum teams and should be testing continuously, catching and reporting as many defects as possible during the Development Phase of each release. For those using this site’s Agile Performance Standards, note that defects in this case are considered “escaped” from the development teams; those that get to customers have escaped from the testing teams. This prevents the “QA will catch it” attitude I have observed in some development teams.

However, as discussed at “Full Stack Capability,” testers should be embedded in the development teams; in other words, in FuSca there are no separate test teams. In this case, the testers get together to create stories for system testing, and each tester pulls the stories they volunteer for into their own team’s backlog. As with separate teams, this work should go on throughout the sprint, using generic system test stories in the team sprint plans to set aside time in member capacities. During the final testing phase the stories are still mostly for the previous release, and then they shift to the current release. Creating those stories takes some time up front, but then you can create templates from them and simply make copies for each new sprint or release.

Those long hardware tests may instead take an entire Planning Release to complete. In that case they will be their own epics, but nothing else changes. Testers, whether as a function or on separate teams, create sprint-length user stories for the work and report negative results as defects the developers must address quickly. Those stories may have a waterfall-like phasing that is unavoidable due to the length, however. A model that has worked well in different settings is to have:

- A story to set up and start the test.

- Small one- or two-task stories to check the test and report any interim data for as many sprints as are needed.

- A final story to compile and analyze the data and break down the test apparatus if appropriate.

Notice that each “phase” results in a deliverable, as required of all user stories.
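If your tracker supports templates or bulk import, the three-part model above is easy to generate. The sketch below is one hypothetical way to do it: the function name, the story wording, and the choice to put the interim checks only in the sprints between setup and wrap-up are assumptions for illustration, not part of the FuSca steps.

```python
def long_test_stories(test_name, total_sprints):
    """Build (sprint_number, story_title) pairs for a multi-sprint test.

    Assumption: setup happens in the first sprint, the final analysis in
    the last, and one small check-in story fills each sprint in between.
    """
    if total_sprints < 2:
        raise ValueError("A long test needs at least a setup and a final sprint")

    stories = [(1, f"Set up and start the {test_name}")]
    for sprint in range(2, total_sprints):
        stories.append((sprint, f"Check the {test_name} and report interim data"))
    stories.append((total_sprints,
                    f"Compile and analyze {test_name} data; break down the apparatus"))
    return stories


# Hypothetical example: a weathering test that runs across four sprints.
for sprint, title in long_test_stories("weathering test", 4):
    print(f"Sprint {sprint}: {title}")
```

Each generated story still needs its own acceptance criteria so that every sprint ends with a deliverable, such as a running test, an interim report, or the final analysis.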

Reviews or testing outside of the program, such as UAT, require no stories! By definition, the team isn’t doing anything, hence there is no need to set aside capacity. If the reviewer/user finds problems, the only time required of the team is that needed to create a defect. The defect then goes through the normal triage and planning process for which you have already set aside time. Of course, the “no escaped defects” standard means the goal is to get zero defects from that process.
